Containment is not a strategy

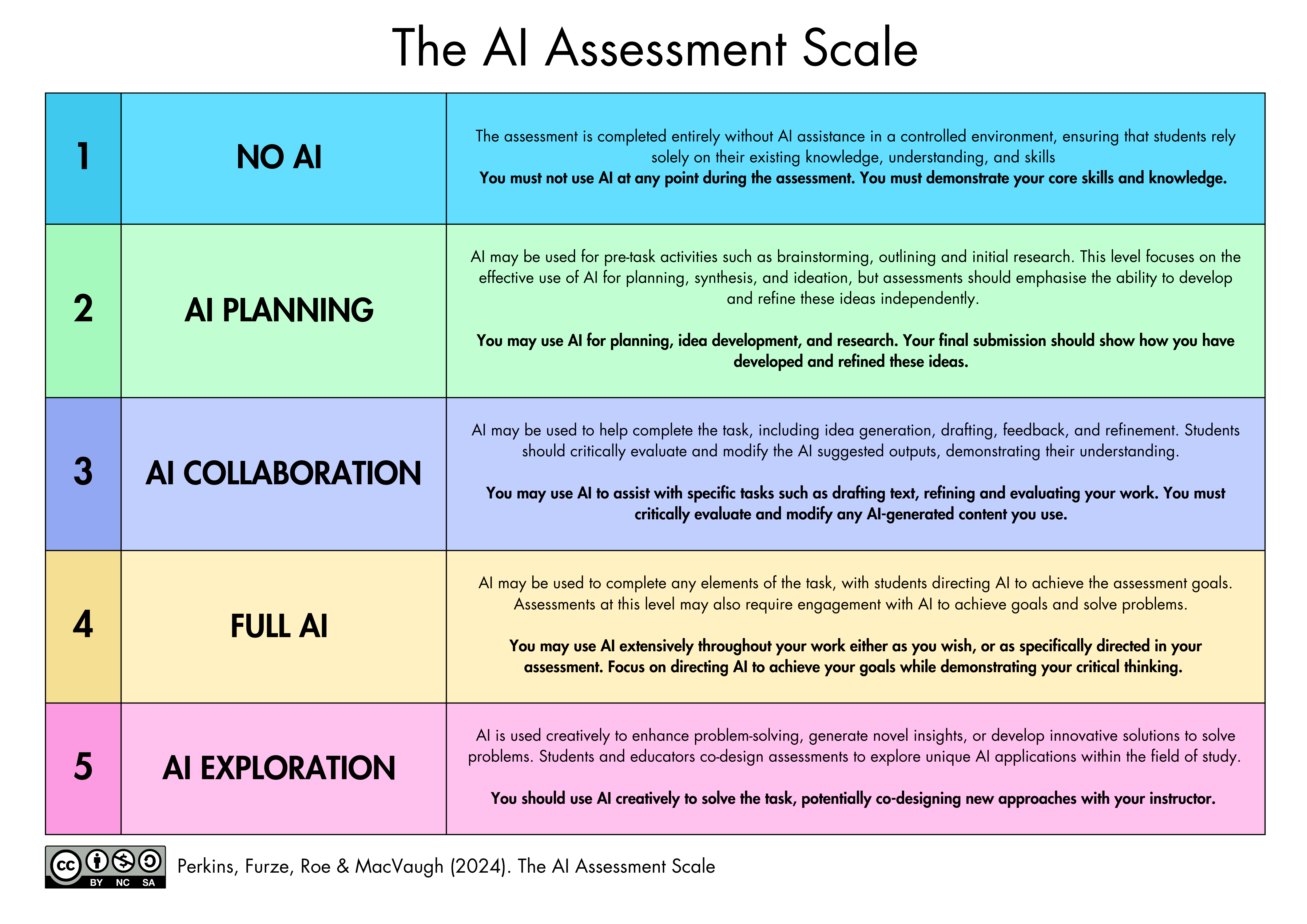

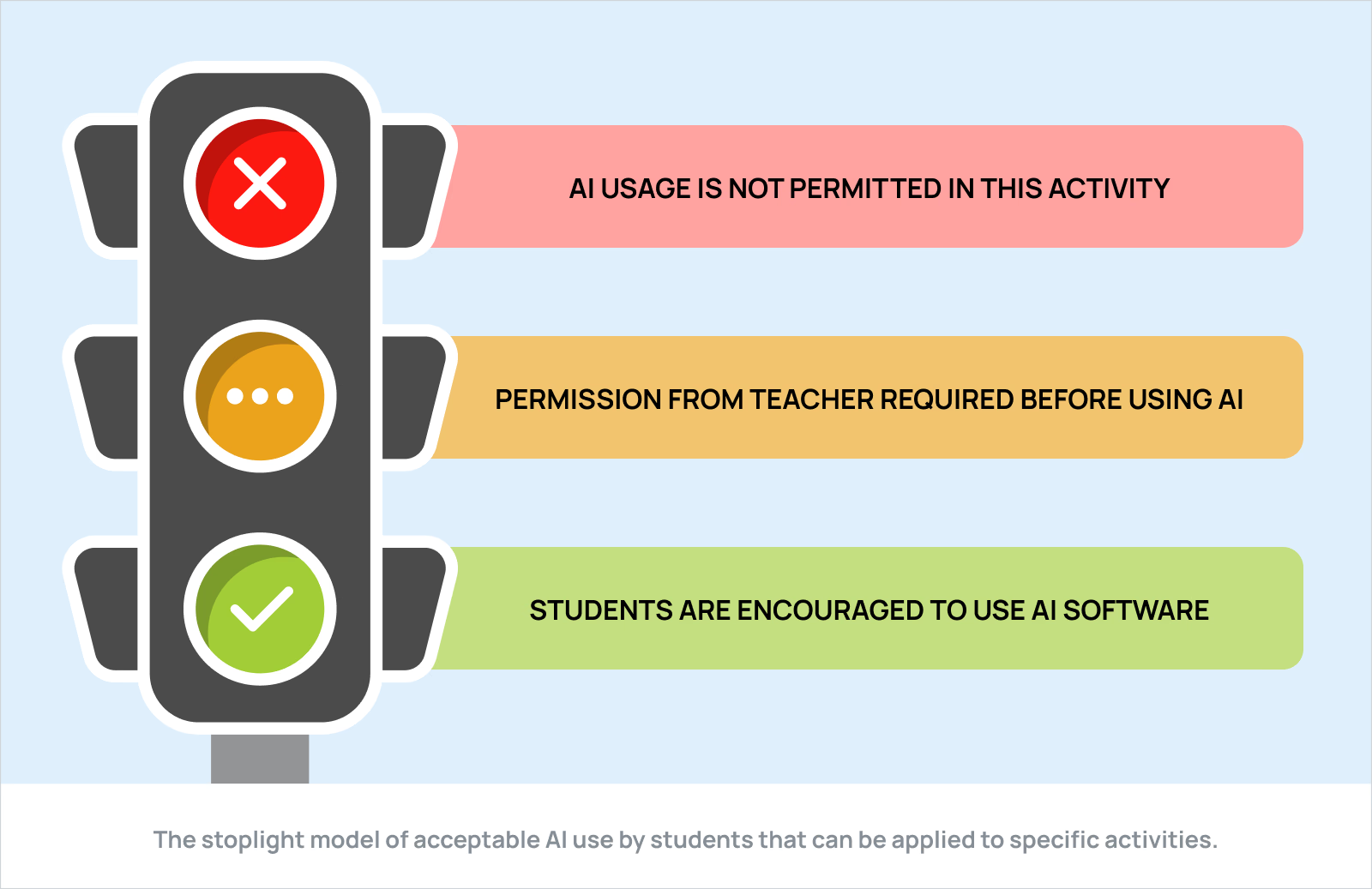

Higher education’s dominant response to AI — traffic light policies, the AI Assessment Scale, detection tools — is a taxonomy of containment, not a framework for change. It takes the existing assessment architecture as given and asks how to protect it, rather than whether it remains fit for purpose. Until that changes, most institutional AI policy will be an elaborate effort to preserve a system whose foundations were already uncertain.

Something’s been nagging at me about the way higher education is responding to AI. Not any single policy or framework, but a quality they seem to share. An orientation that, once seen, is difficult to unsee. Whether it’s a traffic light system colour-coding permissible AI use, the AI Assessment Scale ranking assessments by their susceptibility to AI completion, a revival of oral examinations, or an investment in detection tools, the response is structured the same way. The position is defensive and the goal is to protect the existing system against a destabilising force.

*Figures: the AI Assessment Scale and a traffic light system.*

That’s worth naming clearly, because it shapes what kind of conversation we’re having, and whether it’s the right one.

The AI Assessment Scale, for instance, asks where and how AI may be used in an assessment. Traffic light policies state where AI should be permitted, tolerated, or banned. Both are taxonomies of containment and control. Both take the existing assessment architecture as given and ask how it can be defended or categorised in relation to AI. I haven’t seen any framework or policy that starts with the question of whether the assessment architecture itself remains fit for purpose. None ask what valid evidence of learning looks like when AI is ambient rather than exceptional.

This isn’t a new institutional reflex. When the internet arrived, higher education first resisted it and eventually domesticated it into the virtual learning environment: a tool that reproduced the lecture hall and the filing cabinet in digital form, rather than genuinely reconsidering what learning infrastructure could be when organised as digital networks. We seem to be attempting something similar now, trying to tame this technology and force it into our existing educational paradigm.

But containment strategies don’t help develop the practitioners we actually need: healthcare professionals who exercise genuine judgement about how to work with AI well. Context sovereignty — the capacity to think critically about what you bring to an AI interaction and what you take from it — isn’t developed by telling students which assessments are AI-free zones. Taste and judgement in the use of AI tools aren’t cultivated by compliance with a colour-coded policy.

The conversation worth having isn’t how to protect current assessment structures from AI. It’s what assessment should be doing when AI is part of the professional landscape our graduates are entering, and whether what we currently do bears any meaningful relationship to that. Until that becomes the dominant framing, most institutional response to AI amounts to an elaborate defence of a system that’s no longer fit for purpose, pursued with increasing urgency at precisely the moment when we could be building something better.