Language models can reshape information, not just generate it

A static document and an interactive tool can contain the same information but invite completely different kinds of engagement. Language models make that kind of transformation trivially easy, and the capability is worth exploring.

Most conversations about language models focus on what they generate: text, images, code. But they can also transform existing content, for example reshaping a static document into something interactive. This post walks through a concrete example of what that looks like.

Transforming a Word document into a web app

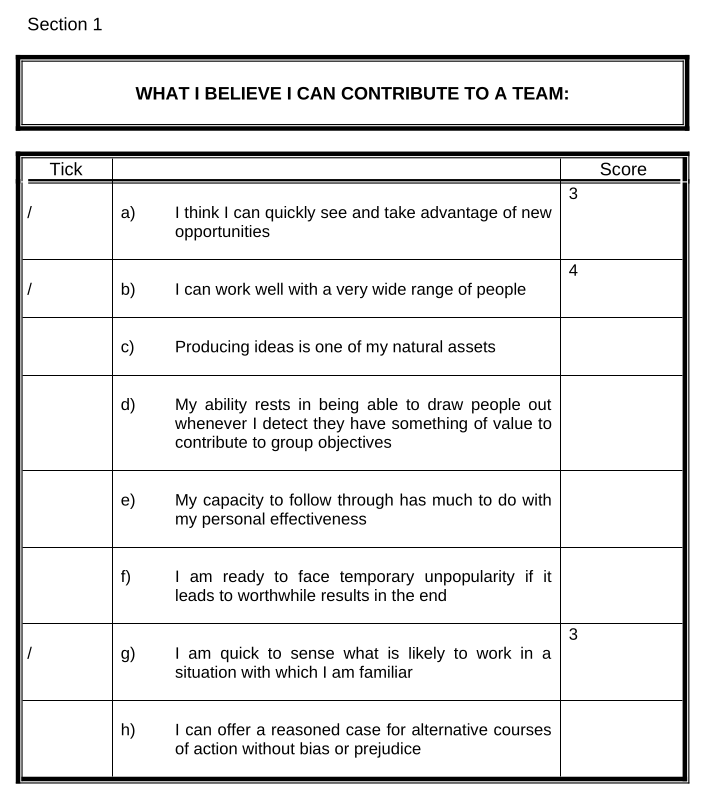

A few weeks ago I was in a project management course. The facilitator asked us to complete the Belbin self-perception questionnaire, a tool for identifying the roles people naturally tend to play in teams. It arrived as a 21-page Word document: a wall of tables, tick boxes, and scoring grids that we were expected to work through by hand.

The original questionnaire: a wall of tables and scoring grids.

I uploaded the document to Claude and typed a single sentence:

Prompt

Convert this into an interactive form.

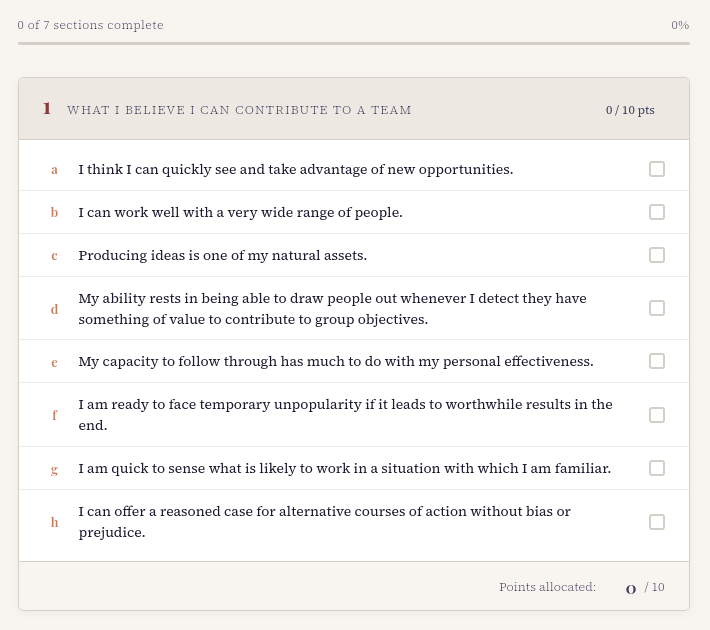

Within a minute or so, I had a fully functional web app: styled, tabbed, and ready to use.

The same questionnaire, transformed into an interactive web app.

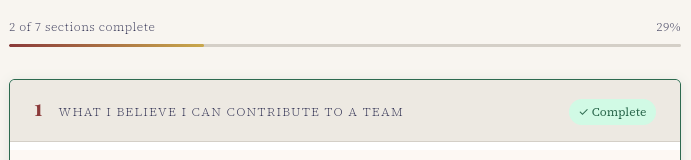

The model inferred design decisions I never specified: a progress indicator at the top of the form, and visual confirmation when a section is completed. None of this was in my prompt.

Progress tracking and completion markers — inferred, not specified.

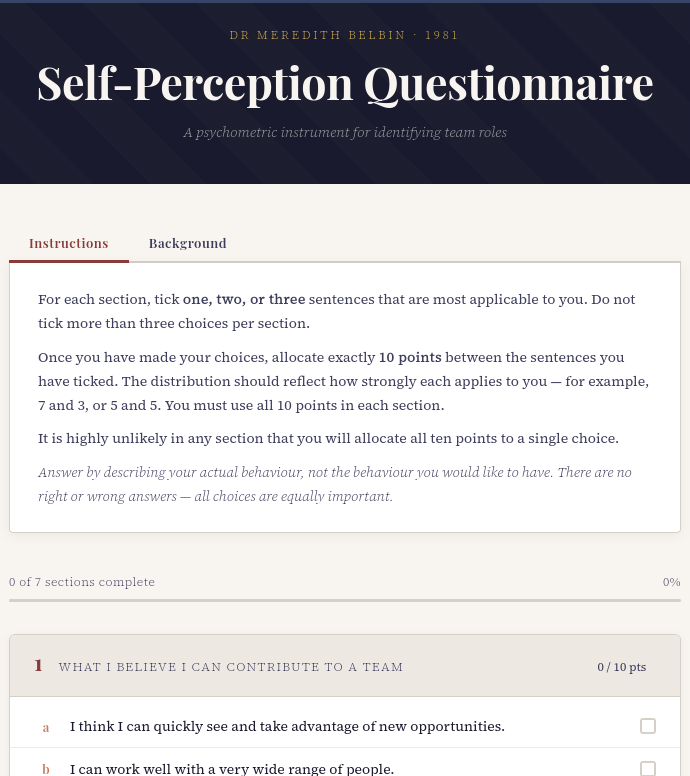

I subsequently asked it to add a Background tab explaining the questionnaire context alongside the instructions.

A Background tab added on request, alongside the instructions.

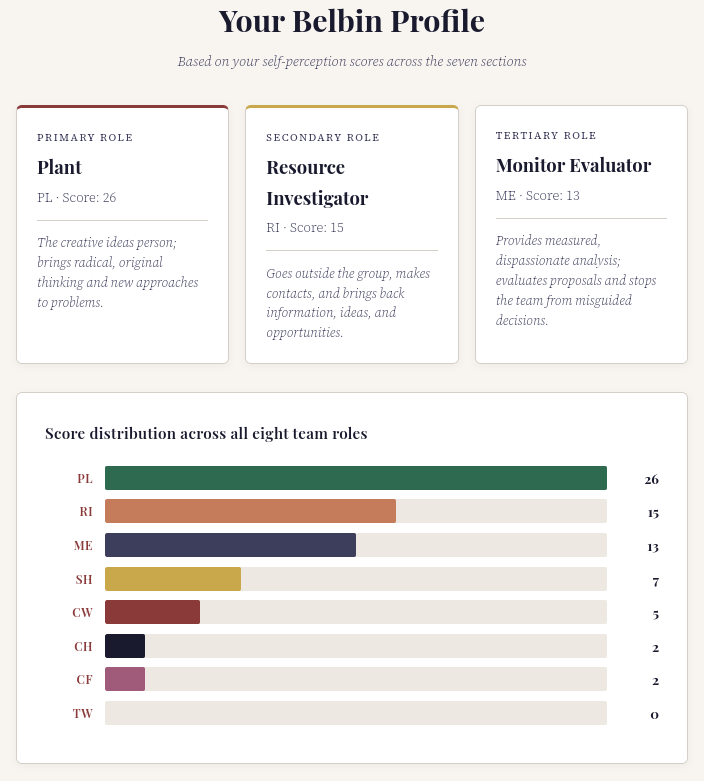

I completed the questionnaire in the browser, downloaded my results, and was done. The whole process took about ten minutes, which was significantly faster (and more enjoyable) than working through the Word version. There are still some minor issues I could tidy up; for example, the accent colours on the role descriptors don’t match the colours in the score distribution graph.

The completed results page with team role scores.

Claude built everything into a single HTML file, styling included. That also meant I could host the form directly on this site, so you can try the interactive Belbin questionnaire.

Same content, different shape

I think of this as information transformation: not generating something new, but reshaping what already exists into a form that’s more useful for the task at hand. Using NotebookLM to convert a research paper into a podcast is a great example of what’s possible. And once you start looking, it’s a pattern that shows up in a lot of places.

What’s easy to miss is how low the barrier has become. I didn’t write any code, specify any design, or do anything more than describe what I wanted in plain language. A document and a single sentence were the input. A working tool was the output.

Format shapes engagement

The format something takes doesn’t just affect convenience. It also affects cognition. A static document and an interactive form contain the same information, but they invite different kinds of engagement. That’s a meaningful difference when the goal is learning, decision-making, or self-assessment.

Think about the documents you already work with: assessment rubrics, clinical guidelines, programme specifications, accreditation standards. Most of them are dense, static, and designed for compliance rather than engagement.

What would happen if you transformed one of them into something interactive and shared it with your students or colleagues?